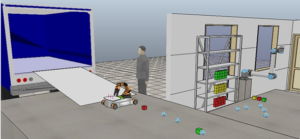

The current challenge is to address various safety critical cases that may occur in automated warehouses (later on referred to as the “scene”) that contain different mechanisms for handling goods that involve multiple objects ranging from simple robot platforms with constrained capabilities to more complex ones, such as robotic arms, to cameras and other identification mechanisms, to self-driving vehicles that ultimately deliver goods to their final destination, as illustrated in Figure 59. The proposed setup is a life-cycle methodology for wirelessly connected cyber-physical system by definition since the warehouse has in addition human workers and other vehicles (such as trucks), working alongside these robotic devices. Moreover, the safety critical cases can occur in different parts of the process and may manifest themselves physically (e.g. device malfunctioning) or virtually (e.g. software error that may produce non expected behaviour).

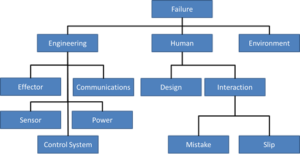

Safety issues in robotic scenarios can be divided into three main categories: engineering errors, human mistakes and poor environmental condition, as illustrated in Figure 60. In such complex environments, different safety critical requirements can be identified. For instance, the safety requirements for an industrial robot, such as a robotic arm, can be very different from those of a mobile robot. Furthermore, highly dynamic scenarios where humans interact with the robotic devices impose even more safety constraints to the environment.

For instance, in the example provided in Figure 60 three different types of robots are interacting in order to achieve the goal of loading a truck with specific products that are stored in a shelf at the warehouse. A problem with the robotic arm effector, e.g., its joints might be loose, could produce unexpected movements that could damage other tools, or even humans, around it. In dynamic scenarios, robotic tools depend highly on information coming from its sensors to build a trustworthy world map, without which even a well-designed control algorithm might end up producing dangerous behavior. When robots are expected to interact with humans a common approach is to employ smaller ones with lower mass, so that even if impacts occur they would not be harmful to the person being hit or pinched. However, due to the nature of the task this is not always an option, and bulkier hardware must be employed. In these cases a number of strategies can be used, such as defining maximum speed limits, collision detection, variable compliance (stiffer for slower movements but compliant for faster movements), and etc. It is also important to realize that in such complex environments different safety critical requirements can be identified. For instance, the safety requirements for an industrial robot, such as the mentioned robotic arm, can be very different from those of a mobile robot. Furthermore, highly dynamic scenarios where humans interact with the robotic devices impose even more safety constraints to the environment.

This use case will focus on using an interoperable format in order to collect and monitor the state of the different assets available in the “scene”. The information that is recorded will be combined and processed by formal models that aim at asserting safe against unsafe behaviour (e.g. by assessing the logical distribution of the robotic applications and functionalities, where and how safety algorithms should be deployed and executed, and etc.). In a subsequent step this information will be used proactively in order to be able to detect such issues ahead of time either locally on the device or remotely in the cloud, depending on the amount of input that is needed and immediacy when it comes to reacting, thus shifting between edge and cloud computing but also taking into consideration the possibility of human intervention locally or remotely.

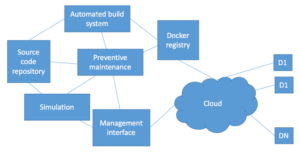

Figure 61 provides a sneak peek into the platform that we aim to exercise within this use case. It consists of the “usual” components one would use in order to automatically deploy software to x86 and other platforms. However, it is extended to take into consideration docker containers to enhance performance but also for compartmentalizing different execution environments. Moreover, it also uses a semantic repository which should be capable of allowing for richer queries on top of the different components defined in the source code repository.